|

IF YOU ARE HAVING A PROBLEM

- Take a look at the logs in C:\Program Files\CodeProject\AI\logs and see if there's anything in there that screams 'something broke'.

- Check the FAQs in the CodeProject.AI Server documentation

- Make sure you've tested the server using the Explorer (blue link, top middle of the dashboard) to ensure it's a server issue rather than something else such as Blue Iris or another app using CodeProject.AI server.

- If there's no obvious answer, then copy and paste into a message the contents of the System Info tab, describe what you are doing, and what you see, and what you would expect.

Always include a copy and paste from the System Info tab of the dashboard. It gives us a ton of info on your setup. If an individual module is failing, click the 'Info' button to the right of the module's name in the status list and copy and paste that info too.

How to reinstall a module

Option 1: Go to the install modules tab on the dashboard and try re-installing the package. Make sure you have enough disk space and a reliable internet connection.

Option 2: (Option 1 with a vengeance): If that fails, head to the module's folder ([app root]\modules\module-id), open a terminal in admin mode, and run ..\..\setup. This will force a manual reinstall using the install script.

Docker: In Docker you will need to open a terminal into the Docker container. You can do this using Docker Desktop, Visual Studio Code with the Docker remote extension, or on the command line using docker attach. Then do a cd /app/modules/module-id, where module-id is the id of the module you need to reinstall. Next, run sudo bash ../../setup.sh --verbosity info to force a manual reinstall of that module. (Set verbosity to quiet, info or loud to get less or more info.)
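As a rough sketch of the Docker steps, the reinstall command can be assembled in Python. This is an illustration only: it assumes you run the script through `docker exec` with its `-w` (working directory) option rather than an interactive terminal, and the container name `codeproject-ai` and the helper `build_reinstall_command` are hypothetical. Only the `/app/modules/module-id` path, `../../setup.sh` and the verbosity values come from the post.

```python
import shlex

VERBOSITY_LEVELS = ("quiet", "info", "loud")  # the values listed in the post


def build_reinstall_command(container, module_id, verbosity="info"):
    """Build a `docker exec` command that re-runs setup.sh for one module.

    `container` is whatever name or ID your container has (hypothetical here).
    """
    if verbosity not in VERBOSITY_LEVELS:
        raise ValueError(f"verbosity must be one of {VERBOSITY_LEVELS}")
    module_dir = f"/app/modules/{module_id}"
    return [
        "docker", "exec",
        "-w", module_dir,  # run from inside the module's folder
        container,
        "sudo", "bash", "../../setup.sh", "--verbosity", verbosity,
    ]


def as_shell_line(command):
    """Render the argument list as a single line you can paste into a terminal."""
    return shlex.join(command)
```

For example, `as_shell_line(build_reinstall_command("codeproject-ai", "ObjectDetectionYOLOv5-6.2"))` gives a line to paste into a terminal, or the list can be passed to `subprocess.run`.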

cheers

Chris Maunder

modified 18-Feb-24 15:48pm.

|

|

|

|

|

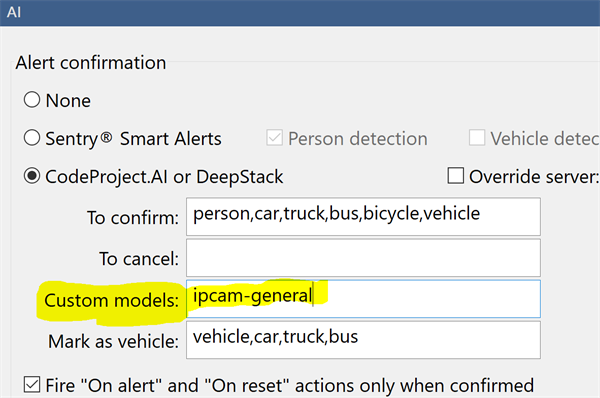

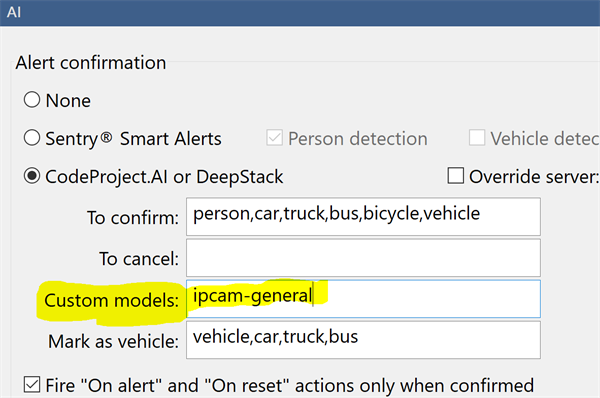

If you are a Blue Iris user and you are using custom models, you will notice that the option in Blue Iris to set the custom model location is greyed out. This is because Blue Iris does not currently make changes to CodeProject.AI Server's settings. Those settings can be changed by manually starting CodeProject.AI with command line parameters (not a great solution), by editing the module settings files (a little messy), or by setting system-wide environment variables (way easier). For version 1.6 we added an API to allow any app to change our settings programmatically, and we take care of stopping/restarting things and persisting the changes.

So: Blue Iris doesn't currently change CodeProject.AI Server's settings, so it doesn't provide you a way to change the custom model folder location from within Blue Iris.

Blue Iris will still use the contents of this folder to determine the calls it makes. If you don't specify a model to use in the Custom Models textbox, then Blue Iris will use all models in the custom models folder that it knows about.

Here we've specified a specific model to use. The Blue Iris help file explains more about how this works, including inclusive and exclusive filters on the models it finds.

CodeProject.AI Server doesn't know about Blue Iris' folder, so it can't tell what models it may be expected to use, nor can it tell Blue Iris about what models CodeProject.AI server has available. Our API allows Blue Iris to get a list of the AI models installed with CodeProject.AI Server, and also to set the folder where these models reside. But Blue Iris doesn't, yet, use that API.

So we do a hack.

At install time we sniff the registry to find where Blue Iris thinks the custom models should be. We then make empty copies of the models that we have, and copy them into that folder. If the folder doesn't exist (e.g. you were using C:\Program Files\CodeProject\AI\AnalysisLayer\CustomObjectDetection\assets, which no longer exists) then we create that folder, and then copy over the empty files.

When Blue Iris looks in that folder to decide what custom calls it can make, it sees the models, notes their names, and uses those names in the calls. CodeProject.AI Server has those models, so when the calls come through we can process them.

Blue Iris doesn't use the models. It uses the list of model names.
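The idea behind the hack can be sketched in a few lines of Python. This is not the installer's actual code, just a minimal illustration: the function name, its parameters and the `*.pt` file pattern are assumptions, and the registry lookup is left out.

```python
from pathlib import Path


def create_placeholder_models(server_models_dir, blue_iris_dir, pattern="*.pt"):
    """Create empty files in blue_iris_dir named after each model in server_models_dir.

    Returns the names of the placeholder files that were created.
    """
    source = Path(server_models_dir)
    target = Path(blue_iris_dir)
    target.mkdir(parents=True, exist_ok=True)  # create the folder if it is missing
    created = []
    for model in sorted(source.glob(pattern)):
        placeholder = target / model.name
        if not placeholder.exists():  # never overwrite a real model file
            placeholder.touch()       # zero-byte file: only the name matters
            created.append(placeholder.name)
    return created
```

Because only the file names are read, a zero-byte file is enough for the model to appear in the list of names.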

If you have your own models in the Blue Iris folder

You will need to copy them to the CodeProject.AI server's custom model folder (by default this is C:\Program Files\CodeProject\AI\AnalysisLayer\ObjectDetectionYolo\custom-models)

If you've modified the registry and have your own custom models

If you were using a folder in C:\Program Files\CodeProject\AI\AnalysisLayer\CustomObjectDetection\ (which no longer existed after the upgrade, but was recreated by our hack) you'll need to re-copy your custom model into that folder.

The simplest solutions are:

- Modify the registry (Computer\HKEY_LOCAL_MACHINE\SOFTWARE\Perspective Software\Blue Iris\Options\AI, key 'deepstack_custompath') so Blue Iris looks in C:\Program Files\CodeProject\AI\AnalysisLayer\ObjectDetectionYolo\custom-models for custom models, and copy your models into there.

or

- Modify the C:\Program Files\CodeProject\AI\AnalysisLayer\ObjectDetectionYolo\modulesettings.json file and set CUSTOM_MODELS_DIR to be whatever Blue Iris thinks the custom model folder is.
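If you would rather script the second option than edit the file by hand, here is a minimal sketch. It deliberately assumes nothing about the layout of modulesettings.json: it walks the parsed document and updates every key named CUSTOM_MODELS_DIR wherever it appears. The function name is hypothetical, and if your file contains comments the standard json module will refuse to parse it, in which case hand editing is the safer route.

```python
import json


def set_custom_models_dir(settings, new_dir):
    """Set every CUSTOM_MODELS_DIR key found anywhere in a parsed settings document.

    Works on nested dicts and lists and returns the number of values changed.
    """
    changed = 0
    if isinstance(settings, dict):
        for key, value in settings.items():
            if key == "CUSTOM_MODELS_DIR":
                settings[key] = new_dir
                changed += 1
            else:
                changed += set_custom_models_dir(value, new_dir)
    elif isinstance(settings, list):
        for item in settings:
            changed += set_custom_models_dir(item, new_dir)
    return changed


def update_settings_file(path, new_dir):
    """Load a JSON settings file, update it in place, and write it back."""
    with open(path, "r", encoding="utf-8") as handle:
        settings = json.load(handle)
    changed = set_custom_models_dir(settings, new_dir)
    if changed:
        with open(path, "w", encoding="utf-8") as handle:
            json.dump(settings, handle, indent=2)
    return changed
```

Back up the file first, and restart the module afterwards so the new value is picked up.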

cheers

Chris Maunder

|

|

|

|

|

Fresh install of 2.6.5. Getting this error when starting a Large YoloV8 Model with Coral. It seemingly works fine but switches over from TPU to CPU silently off and on (even though the error states it's using CPU)

00:43:45:Module 'Object Detection (Coral)' 2.2.2 (ID: ObjectDetectionCoral)

00:43:45:Valid: True

00:43:45:Module Path: <root>\modules\ObjectDetectionCoral

00:43:45:Module Location: Internal

00:43:45:AutoStart: True

00:43:45:Queue: objectdetection_queue

00:43:45:Runtime: python3.9

00:43:45:Runtime Location: Local

00:43:45:FilePath: objectdetection_coral_adapter.py

00:43:45:Start pause: 1 sec

00:43:45:Parallelism: 16

00:43:45:LogVerbosity:

00:43:45:Platforms: all

00:43:45:GPU Libraries: installed if available

00:43:45:GPU: use if supported

00:43:45:Accelerator:

00:43:45:Half Precision: enable

00:43:45:Environment Variables

00:43:45:CPAI_CORAL_MODEL_NAME = YOLOv8

00:43:45:CPAI_CORAL_MULTI_TPU = True

00:43:45:MODELS_DIR = <root>\modules\ObjectDetectionCoral\assets

00:43:45:MODEL_SIZE = large

00:43:45:

00:43:45:Started Object Detection (Coral) module

00:43:47:objectdetection_coral_adapter.py: TPU detected

00:43:47:objectdetection_coral_adapter.py: Attempting multi-TPU initialisation

00:43:47:objectdetection_coral_adapter.py: Supporting multiple Edge TPUs

00:46:30:objectdetection_coral_adapter.py: ERROR:root:TFLite file C:\Program Files\CodeProject\AI\modules\ObjectDetectionCoral\assets\tf2_ssd_mobilenet_v1_fpn_640x640_coco17_ptq_segment_0_of_2_edgetpu.tflite doesn't exist

00:46:30:objectdetection_coral_adapter.py: WARNING:root:Model file not found: [Errno 2] No such file or directory: 'C:\\Program Files\\CodeProject\\AI\\modules\\ObjectDetectionCoral\\assets\\tf2_ssd_mobilenet_v1_fpn_640x640_coco17_ptq_segment_0_of_2_edgetpu.tflite'

00:46:30:objectdetection_coral_adapter.py: WARNING:root:No Coral TPUs found or able to be initialized. Using CPU.

00:46:30:objectdetection_coral_adapter.py: WARNING:root:Unable to load delegate for TPU cpu: Failed to load delegate from edgetpu.dll

00:46:30:objectdetection_coral_adapter.py: WARNING:root:Unable to create interpreter for CPU using edgeTPU library: cpu

00:46:30:objectdetection_coral_adapter.py: ERROR:root:TFLite file C:\Program Files\CodeProject\AI\modules\ObjectDetectionCoral\assets\tf2_ssd_mobilenet_v1_fpn_640x640_coco17_ptq_segment_0_of_2_edgetpu.tflite doesn't exist

00:46:30:objectdetection_coral_adapter.py: WARNING:root:Model file not found: [Errno 2] No such file or directory: 'C:\\Program Files\\CodeProject\\AI\\modules\\ObjectDetectionCoral\\assets\\tf2_ssd_mobilenet_v1_fpn_640x640_coco17_ptq_segment_0_of_2_edgetpu.tflite'

00:46:30:objectdetection_coral_adapter.py: WARNING:root:No Coral TPUs found or able to be initialized. Using CPU.

00:46:30:objectdetection_coral_adapter.py: WARNING:root:Unable to load delegate for TPU cpu: Failed to load delegate from edgetpu.dll

00:4

Server version: 2.6.5

System: Windows

Operating System: Windows (Microsoft Windows 11 version 10.0.22631)

CPUs: AMD Ryzen 9 3950X 16-Core Processor (AMD)

1 CPU x 16 cores. 32 logical processors (x64)

GPU (Primary): NVIDIA GeForce RTX 3060 (12 GiB) (NVIDIA)

Driver: 555.85, CUDA: 12.2.140 (up to: 12.5), Compute: 8.6, cuDNN: 8.5

System RAM: 64 GiB

Platform: Windows

BuildConfig: Release

Execution Env: Native

Runtime Env: Production

Runtimes installed:

.NET runtime: 8.0.3

.NET SDK: 8.0.203

Default Python: Not found

Go: Not found

NodeJS: 21.7.2

Rust: Not found

Video adapter info:

NVIDIA GeForce RTX 3060:

Driver Version 32.0.15.5585

Video Processor NVIDIA GeForce RTX 3060

System GPU info:

GPU 3D Usage 31%

GPU RAM Usage 6.3 GiB

Global Environment variables:

CPAI_APPROOTPATH = <root>

CPAI_PORT = 32168

|

|

|

|

|

Looks like the model file isn’t getting parsed correctly for your 2-TPU system. It looks like you’ve requested the YOLOv8 model but it’s looking for the MobileNet files for some reason. I don’t have much insight into how that process works I’m afraid.

As a temporary workaround, try the medium model size?
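To see at a glance which model files the module is actually asking for, the "TFLite file ... doesn't exist" lines can be pulled out of a pasted log. This is a small sketch that assumes the log lines look like the ones quoted in the report; the function name is made up for illustration.

```python
import re
from pathlib import PureWindowsPath

# Matches the "TFLite file <path> doesn't exist" lines seen in the quoted log.
MISSING_FILE = re.compile(r"TFLite file (?P<path>.+?\.tflite) doesn't exist")


def missing_model_files(log_lines):
    """Return the distinct model file names the module failed to find, in order."""
    seen = []
    for line in log_lines:
        match = MISSING_FILE.search(line)
        if match:
            name = PureWindowsPath(match.group("path")).name
            if name not in seen:
                seen.append(name)
    return seen
```

Comparing the returned names with the CPAI_CORAL_MODEL_NAME value in the module's startup block makes a mismatch, such as MobileNet files being requested when YOLOv8 is configured, easy to spot.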

|

|

|

|

|

I've had a computer running Windows 10 w/version 2.5.4 great. I decided to upgrade to Windows 11. That may have been a complete mistake but please try to help me anyway. Of course I also updated to version 2.6.2, so I have changed two variables at once, so yes, I may be an idiot, but please try to help me anyway.

The good news is that CodeProject still works, BUT the status window only shows "CPU (DirectML)" rather than "GPU (DirectML)" using the YOLOv5Net version. I am pasting the "System Info" information from both before and after at the bottom of this post. I do note that the format has changed in 2.6.2 and now shows a "Runtimes installed" section, which shows that the .NET SDK is not installed, but I don't believe I had that installed before, so that doesn't seem to be the problem. Let me know if I'm wrong about that.

I have looked at the logs, and don't see any indication of a problem. In fact, I see "GPU enabled: enabled" in the logs. I will include a screenshot of that here, with the logging turned up to "trace" prior to restarting.

I am fully aware that the status window did not always update to show GPU usage until after some actual use, even with 2.5.4, so I thought that perhaps might be the problem, but even after running some test images, the status window still shows "CPU":

Perhaps the only issue is a problem with the status window in 2.6.2? Any ideas? Does "GPU enabled: enabled" indicate the GPU is being used, or is the status window correct that CPU is still being used? For what it's worth, this is a screenshot of it working with Windows 10 and 2.5.4. I really did have it all working great, which makes this even more frustrating if this was self-inflicted damage, and I cannot tell whether it's a difference in 2.6.2 or with Windows 11.

Before (Windows 10, 2.5.4)

Server version: 2.5.4

System: Windows

Operating System: Windows (Microsoft Windows 10.0.19045)

CPUs: AMD Ryzen 9 5900HX with Radeon Graphics (AMD)

1 CPU x 8 cores. 16 logical processors (x64)

GPU (Primary): Microsoft Remote Display Adapter (Microsoft)

Driver: 10.0.19041.4355

System RAM: 31 GiB

Platform: Windows

BuildConfig: Release

Execution Env: Native

Runtime Env: Production

.NET framework: .NET 7.0.5

Default Python:

Video adapter info:

Microsoft Remote Display Adapter:

Driver Version 10.0.19041.4355

Video Processor

AMD Radeon(TM) Graphics:

Driver Version 31.0.12027.9001

Video Processor AMD Radeon Graphics Processor (0x1638)

System GPU info:

GPU 3D Usage 7%

GPU RAM Usage 514.3 MiB

Global Environment variables:

CPAI_APPROOTPATH = <root>

CPAI_PORT = 32168

After (Windows 11, 2.6.2)

Server version: 2.6.2

System: Windows

Operating System: Windows (Microsoft Windows 11 version 10.0.22631)

CPUs: AMD Ryzen 9 5900HX with Radeon Graphics (AMD)

1 CPU x 8 cores. 16 logical processors (x64)

GPU (Primary): AMD Radeon(TM) Graphics (512 MiB) (Advanced Micro Devices, Inc.)

Driver: 31.0.21912.14

System RAM: 15 GiB

Platform: Windows

BuildConfig: Release

Execution Env: Native

Runtime Env: Production

Runtimes installed:

.NET runtime: 7.0.19

.NET SDK: Not found

Default Python: Not found

Go: Not found

NodeJS: Not found

Rust: Not found

Video adapter info:

AMD Radeon(TM) Graphics:

Driver Version 31.0.21912.14

Video Processor AMD Radeon Graphics Processor (0x1638)

System GPU info:

GPU 3D Usage 2%

GPU RAM Usage 514.8 MiB

Global Environment variables:

CPAI_APPROOTPATH = <root>

CPAI_PORT = 32168
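When two System Info pastes need comparing, a quick diff can save some squinting. The following is a minimal sketch, not part of CodeProject.AI: it only understands simple "Key: value" lines, skips lines without a colon (such as "GPU 3D Usage 7%"), and keeps just the last value when a key repeats (for example "Driver Version" under several adapters). Both function names are hypothetical.

```python
def parse_system_info(text):
    """Parse 'Key: value' lines from a System Info paste into a dict."""
    info = {}
    for line in text.splitlines():
        key, sep, value = line.partition(":")
        if sep and key.strip():
            info[key.strip()] = value.strip()
    return info


def diff_system_info(before, after):
    """Return {key: (before_value, after_value)} for keys whose values differ."""
    old, new = parse_system_info(before), parse_system_info(after)
    return {
        key: (old.get(key), new.get(key))
        for key in sorted(old.keys() | new.keys())
        if old.get(key) != new.get(key)
    }
```

Run against the two pastes in this post, a diff like this would list "GPU (Primary)" and "System RAM" among the changed keys, alongside the expected version differences.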

|

|

|

|

|

I downloaded CodeProject.AI-Server-win-x64-2.6.2 and ran the install. The process was done within one minute and said successful. However, the service never started, and there was no log file in /program files/codeproject/ai. The CodeProject dashboard at http://localhost:32168/ loaded but the server window read "Unable to contact AI Server" and the "info" tab had no information.

I was able to install CodeProject.AI.Server-1.6.7.0.

I have tried several times with no luck. Each time I uninstalled the prior version and deleted codeproject directories in Program Files and Program Data.

I was able to install on another Windows 10 computer with no problems. Very frustrating.

Here are some of the system info screens.

OS Name Microsoft Windows 10 Pro

Version 10.0.19045 Build 19045

Other OS Description Not Available

OS Manufacturer Microsoft Corporation

System Name POWERSPEC-B743

System Manufacturer MicroElectronics

System Model B743

System Type x64-based PC

System SKU 984096

Processor Intel(R) Core(TM) i7-9700K CPU @ 3.60GHz, 3601 Mhz, 8 Core(s), 8 Logical Processor(s)

BIOS Version/Date American Megatrends Inc. P1.10A, 9/11/2019

SMBIOS Version 3.1

Embedded Controller Version 255.255

BIOS Mode UEFI

BaseBoard Manufacturer ASRock

BaseBoard Product Z390 Phantom Gaming 4S/ac

BaseBoard Version

Platform Role Desktop

Secure Boot State Off

PCR7 Configuration Elevation Required to View

Windows Directory C:\WINDOWS

System Directory C:\WINDOWS\system32

Boot Device \Device\HarddiskVolume5

Locale United States

Hardware Abstraction Layer Version = "10.0.19041.3636"

User Name Not Available

Time Zone Eastern Daylight Time

Installed Physical Memory (RAM) 32.0 GB

Total Physical Memory 31.7 GB

ZES_ENABLE_SYSMAN 1 <SYSTEM>

windir %SystemRoot% <SYSTEM>

VBOX_MSI_INSTALL_PATH C:\Program Files\Oracle\VirtualBox\ <SYSTEM>

USERNAME SYSTEM <SYSTEM>

TMP %SystemRoot%\TEMP <SYSTEM>

TMP %USERPROFILE%\AppData\Local\Temp NT AUTHORITY\SYSTEM

TMP %USERPROFILE%\AppData\Local\Temp POWERSPEC-B743\jrswa

TEMP %SystemRoot%\TEMP <SYSTEM>

TEMP %USERPROFILE%\AppData\Local\Temp NT AUTHORITY\SYSTEM

TEMP %USERPROFILE%\AppData\Local\Temp POWERSPEC-B743\jrswa

QTJAVA C:\Program Files (x86)\QuickTime\QTSystem\QTJava.zip <SYSTEM>

PSModulePath %ProgramFiles%\WindowsPowerShell\Modules;%SystemRoot%\system32\WindowsPowerShell\v1.0\Modules <SYSTEM>

PROCESSOR_REVISION 9e0d <SYSTEM>

PROCESSOR_LEVEL 6 <SYSTEM>

PROCESSOR_IDENTIFIER Intel64 Family 6 Model 158 Stepping 13, GenuineIntel <SYSTEM>

PROCESSOR_ARCHITECTURE AMD64 <SYSTEM>

PATHEXT .COM;.EXE;.BAT;.CMD;.VBS;.VBE;.JS;.JSE;.WSF;.WSH;.MSC <SYSTEM>

Path C:\Program Files\Common Files\Oracle\Java\javapath;C:\Windows\system32;C:\Windows;C:\Windows\System32\Wbem;C:\Windows\System32\WindowsPowerShell\v1.0\;C:\Windows\System32\OpenSSH\;C:\Program Files (x86)\NVIDIA Corporation\PhysX\Common;%SystemRoot%\system32;%SystemRoot%;%SystemRoot%\System32\Wbem;%SYSTEMROOT%\System32\WindowsPowerShell\v1.0\;%SYSTEMROOT%\System32\OpenSSH\;C:\Program Files\PuTTY\;C:\Program Files\Intel\Intel(R) Memory and Storage Tool\;C:\Program Files (x86)\QuickTime\QTSystem\;C:\Program Files\OpenVPN\bin <SYSTEM>

Path %USERPROFILE%\AppData\Local\Microsoft\WindowsApps; NT AUTHORITY\SYSTEM

Path C:\Users\jrswa\AppData\Local\Programs\Python\Launcher\;%USERPROFILE%\AppData\Local\Microsoft\WindowsApps; POWERSPEC-B743\jrswa

OS Windows_NT <SYSTEM>

OneDriveConsumer C:\Users\jrswa\OneDrive POWERSPEC-B743\jrswa

OneDrive C:\Users\jrswa\OneDrive POWERSPEC-B743\jrswa

NUMBER_OF_PROCESSORS 8 <SYSTEM>

DriverData C:\Windows\System32\Drivers\DriverData <SYSTEM>

configsetroot %SystemRoot%\ConfigSetRoot <SYSTEM>

ComSpec %SystemRoot%\system32\cmd.exe <SYSTEM>

CLASSPATH .;C:\Program Files (x86)\QuickTime\QTSystem\QTJava.zip

John

modified 18hrs ago.

|

|

|

|

|

I have this exact same problem.

|

|

|

|

|

Is there anything in C:\Program Files\CodeProject\AI?

If so, can you go to C:\Program Files\CodeProject\AI\server, open a terminal, and in the terminal type CodeProject.AI.Server.exe (and ENTER) to launch the server?

Let us know what you see in the terminal, and whether the dashboard works.
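A quick way to tell whether anything is listening on the server's port after launching it is a plain TCP check. This is only a sketch: port 32168 is the default shown in the System Info pastes in this thread, the function name is made up, and an open port does not prove the server is healthy, only that something answered.

```python
import socket


def port_is_open(host="localhost", port=32168, timeout=3.0):
    """Return True if a TCP connection to host:port can be opened within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False  # refused, unreachable, or timed out
```

If this returns False right after starting CodeProject.AI.Server.exe, the terminal output is the place to look next.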

cheers

Chris Maunder

|

|

|

|

|

Chris,

Thank you, that got me up and running. The first time I ran CodeProject.AI.Server.exe I got the message: "You must install .NET to run this application" with a link to download that software. I downloaded and installed the .NET runtime.

A second execution of CodeProject.AI.Server.exe yielded the message "You must install or update .NET to run this application" with a link to download that software.

I did so and then CodeProject appears to have loaded the service and I can get to the Dashboard. Blue Iris also recognizes CodeProject. I have yet to try and do anything with CodeProject but I am a lot further than before.

Thank you very much for your quick response.

|

|

|

|

|

Hi, trying to set up CodeProject.AI (2.6.4, latest release available) for use with object detection and the Google Coral USB.

Two issues:

1. When I try to install the Coral module, I get "Error in Install ObjectDetectionCoral: 404" in the web ui. Nothing in the logs, even with setting install verbosity to "loud". Any other way of installing?

2. (Not sure if important): YOLOv5 6.2 keeps throwing errors. It couldn't be installed, because torch couldn't be found/installed. Had the same issue with Facerecognition, but was able to uninstall facerecognition.

16:52:12:Started Object Detection (YOLOv5 6.2) module

16:52:12:detect_adapter.py: Traceback (most recent call last):

16:52:12:detect_adapter.py: File "/usr/bin/codeproject.ai-server-2.6.4/modules/ObjectDetectionYOLOv5-6.2/detect_adapter.py", line 20, in <module>

16:52:12:detect_adapter.py: from detect import do_detection

16:52:12:detect_adapter.py: File "/usr/bin/codeproject.ai-server-2.6.4/modules/ObjectDetectionYOLOv5-6.2/detect.py", line 7, in <module>

16:52:12:detect_adapter.py: import torch

16:52:12:detect_adapter.py: ModuleNotFoundError: No module named 'torch'

16:52:12:Module ObjectDetectionYOLOv5-6.2 has shutdown

I really only want to run the Coral module, so not sure if #2 is even relevant for me.
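For a ModuleNotFoundError like the one in this traceback, it can help to confirm which packages the module's Python interpreter can actually see. A minimal sketch follows, using the standard library's `importlib.util.find_spec`; the function name is hypothetical, and the check is only meaningful when run with the same interpreter (or virtual environment) the module uses.

```python
import importlib.util


def missing_modules(names):
    """Return the subset of top-level module names that cannot be found for import."""
    return [name for name in names if importlib.util.find_spec(name) is None]
```

Calling `missing_modules(["torch"])` from the module's own Python would return `["torch"]` if the install of that package failed.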

|

|

|

|

|

|

Thanks for getting back to me!

I've re-installed on a fresh Ubuntu 22.04 machine - and am still getting the 404 error.

FYI, I've noted below the errors I received when installing, although I'm not sure that these are in any way related to the 404 error.

Initial installation (dpkg -i):

Installing jq...

E: Could not get lock /var/lib/apt/lists/lock. It is held by process 2090 (apt-get)

E: Unable to lock directory /var/lib/apt/lists/

Scanning processes...

No services need to be restarted.

No containers need to be restarted.

No user sessions are running outdated binaries.

No VM guests are running outdated hypervisor (qemu) binaries on this host.

Doesn't appear to have a negative impact that I can see right now, but FYI.

Upon the first start, when installing the various modules:

Infor FaceProcessing: Installing Python 3.8

Error FaceProcessing: E: Problem renaming the file /var/cache/apt/pkgcache.bin.inP5CH to /var/cache/apt/pkgcache.bin - rename (2: No such file or directory)

Error FaceProcessing: W: You may want to run apt-get update to correct these problems

Error FaceProcessing: E: The package cache file is corrupted

Could I somehow get log entries on what is being tried to install the Coral module which results in the 404 error, as this error is only shown in the webif, but cannot be found in the logs?

|

|

|

|

|

You'll need to sudo to install the server.

cheers

Chris Maunder

|

|

|

|

|

Yes, I ran all of the install steps as root and also started the server upon the initial launch as root from the command line to see if there are any additional log entries there.

|

|

|

|

|

I searched around and couldn't find anything. I just upgraded to 2.6.2, running in Docker on an Ubuntu VM (Proxmox). Have been running 2.1.1 successfully for quite a while now. I'm using with BlueIris.

Detection seems to be working, but I'm getting these spurious errors about "catdog_m.pt" in the logs that have me a bit perplexed. I've searched around and can't find anything. Any ideas? I ask because I am hoping to get better detection of my dog, who is a fairly large breed and is often identified as a "person" on my backyard cameras. Thanks in advance!

17:10:29:Object Detection (YOLOv5 6.2): /app/preinstalled-modules/ObjectDetectionYOLOv5-6.2/custom-models/catdog_m.pt does not exist

17:10:29:Object Detection (YOLOv5 6.2): Unable to create YOLO detector for model catdog_m

17:10:29:Object Detection (YOLOv5 6.2): Detecting using catdog_m

17:10:29:Object Detection (YOLOv5 6.2): /app/preinstalled-modules/ObjectDetectionYOLOv5-6.2/custom-models/catdog_m.pt does not exist

17:10:29:Response rec'd from Object Detection (YOLOv5 6.2) command 'custom' (...7ae351)

17:10:29:Object Detection (YOLOv5 6.2): Unable to create YOLO detector for model catdog_m

|

|

|

|

|

Do you have the catdog_m.pt file in the /app/preinstalled-modules/ObjectDetectionYOLOv5-6.2/custom-models/ directory in the docker container?

cheers

Chris Maunder

|

|

|

|

|

Thanks Chris.

Oddly, catdog_m was showing up in the BlueIris list under the AI settings right after I upgraded the Docker container to 2.6.2, along with some others like 'best'.

However, for some odd reason, I checked it again the next day and the 'catdog_m' and other odd models disappeared from the list of custom models in BlueIris! The custom model list now just shows the typical 'actionnetv2' and the various 'ipcam' models. No changes on my part, it just self-resolved.

Either way, I ended up working around this by changing all my cameras in BI to use the 'ipcam-general' model, which ends up giving me faster detection time anyway. I've been meaning to do that anyway!

Thanks for such a great tool!

|

|

|

|

|

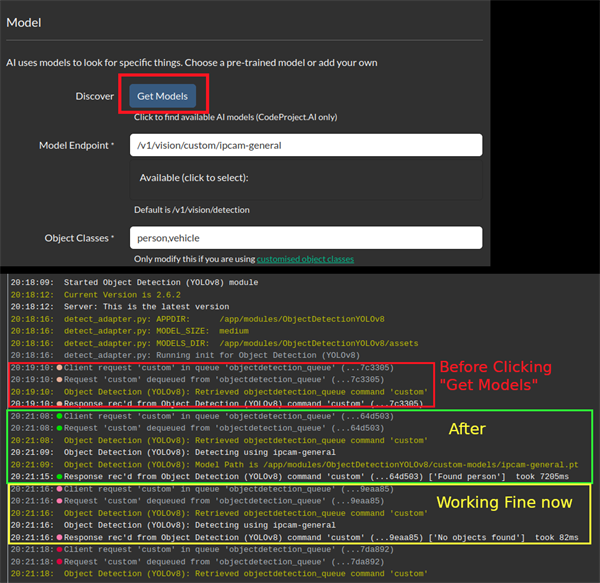

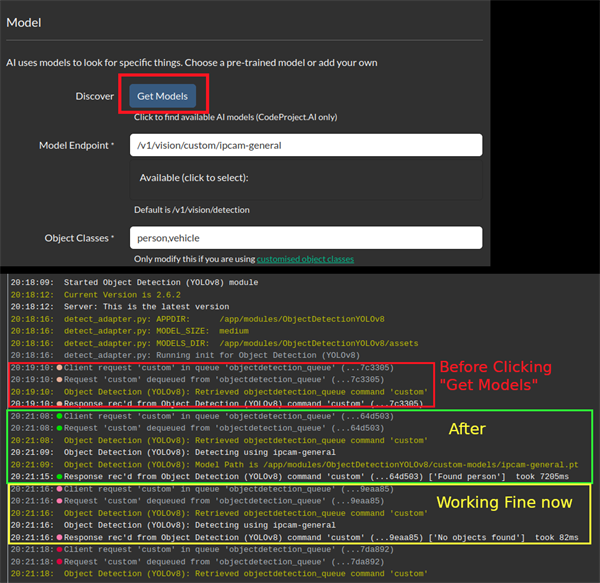

I'm using ipcam-general with Agent DVR but CPAI doesn't seem to load the custom model automatically after a restart.

Logs say:

SetFailed: Message from CPAI: No custom models found at CoreLogic.AI.ObjectRecognizer.Detect()

So Object recognition doesn't work until I click a "Get Models" button on Agent DVR (not sure what this actually triggers).

https://old.reddit.com/r/ispyconnect/comments/1cxj135/settings_bug_after_restart/

modified 3 days ago.

|

|

|

|

|

Thanks very much for your message. Just to help narrow it down a bit, could you please share your System Info tab from your CodeProject.AI Server dashboard? Also, does this happen if you use other Object Detection modules?

Thanks,

Sean Ewington

CodeProject

|

|

|

|

|

This is just a problem right after a reboot. If I choose the default model it works fine.

Server version: 2.6.2

System: Docker (be3d4b306206)

Operating System: Linux (Ubuntu 22.04)

CPUs: Intel(R) Core(TM) i5-3350P CPU @ 3.10GHz (Intel)

1 CPU x 4 cores. 4 logical processors (x64)

GPU (Primary): (NVIDIA), CUDA: (up to: ), Compute: , cuDNN: 8.9.6

System RAM: 8 GiB

Platform: Linux

BuildConfig: Release

Execution Env: Docker

Runtime Env: Production

Runtimes installed:

.NET runtime: 7.0.17

.NET SDK: Not found

Default Python: 3.10.12

Go: Not found

NodeJS: Not found

Rust: Not found

Video adapter info:

System GPU info:

GPU 3D Usage 0%

GPU RAM Usage 0

Global Environment variables:

CPAI_APPROOTPATH = <root>

CPAI_PORT = 32168

01:18:13:System: Docker (be3d4b306206)

01:18:13:Operating System: Linux (Ubuntu 22.04)

01:18:13:CPUs: Intel(R) Core(TM) i5-3350P CPU @ 3.10GHz (Intel)

01:18:13: 1 CPU x 4 cores. 4 logical processors (x64)

01:18:13:GPU (Primary): NVIDIA GeForce GT 1030 (2 GiB) (NVIDIA)

01:18:13: Driver: 535.171.04, CUDA: 12.2 (up to: 12.2), Compute: 6.1, cuDNN: 8.9.6

01:18:13:System RAM: 8 GiB

01:18:13:Platform: Linux

01:18:13:BuildConfig: Release

01:18:13:Execution Env: Docker

01:18:13:Runtime Env: Production

01:18:13:Runtimes installed:

01:18:13: .NET runtime: 7.0.17

01:18:13: .NET SDK: Not found

01:18:13: Default Python: 3.10.12

01:18:13: Go: Not found

01:18:13: NodeJS: Not found

01:18:13: Rust: Not found

01:18:13:App DataDir: /etc/codeproject/ai

01:18:13:Video adapter info:

01:18:13:STARTING CODEPROJECT.AI SERVER

01:18:13:RUNTIMES_PATH = /app/runtimes

01:18:13:PREINSTALLED_MODULES_PATH = /app/preinstalled-modules

01:18:13:DEMO_MODULES_PATH = /app/demos/modules

01:18:13:MODULES_PATH = /app/modules

01:18:13:PYTHON_PATH = /bin/linux/%PYTHON_NAME%/venv/bin/python3

01:18:13:Data Dir = /etc/codeproject/ai

01:18:14:Server version: 2.6.2

01:18:14:Overriding address(es) 'http://+:32168, http://+:5000'. Binding to endpoints defined via IConfiguration and/or UseKestrel() instead.

01:18:17:

01:18:17:Module 'Object Detection (YOLOv8)' 1.4.3 (ID: ObjectDetectionYOLOv8)

01:18:17:Valid: True

01:18:17:Module Path: <root>/modules/ObjectDetectionYOLOv8

01:18:17:AutoStart: True

01:18:17:Queue: objectdetection_queue

01:18:17:Runtime: python3.8

01:18:17:Runtime Loc: Local

01:18:17:FilePath: detect_adapter.py

01:18:17:Start pause: 1 sec

01:18:17:Parallelism: 0

01:18:17:LogVerbosity:

01:18:17:Platforms: all

01:18:17:GPU Libraries: installed if available

01:18:17:GPU Enabled: enabled

01:18:17:Accelerator:

01:18:17:Half Precis.: enable

01:18:17:Environment Variables

01:18:17:APPDIR = <root>/modules/ObjectDetectionYOLOv8

01:18:17:CUSTOM_MODELS_DIR = <root>/modules/ObjectDetectionYOLOv8/custom-models

01:18:17:MODELS_DIR = <root>/modules/ObjectDetectionYOLOv8/assets

01:18:17:MODEL_SIZE = medium

01:18:17:USE_CUDA = True

01:18:17:YOLO_VERBOSE = false

01:18:17:

01:18:17:Started Object Detection (YOLOv8) module

01:18:20:Server: This is the latest version

01:19:17:Response rec'd from Object Detection (YOLOv8) command 'custom' (...b24558)

01:19:19:Response rec'd from Object Detection (YOLOv8) command 'custom' (...9d1fa9)

-----I CLICKED "GET MODELS" NOW, DIDN'T CHANGE ANYTHING ELSE------------

01:20:38:Object Detection (YOLOv8): Detecting using ipcam-general

01:20:38:Object Detection (YOLOv8): Detecting using ipcam-general

01:20:45:Response rec'd from Object Detection (YOLOv8) command 'custom' (...ac7e57) ['No objects found'] took 7023ms

01:20:45:Object Detection (YOLOv8): Detecting using ipcam-general

01:20:45:Response rec'd from Object Detection (YOLOv8) command 'custom' (...a4f838) ['No objects found'] took 6594ms

01:20:45:Object Detection (YOLOv8): Detecting using ipcam-general

01:20:45:Response rec'd from Object Detection (YOLOv8) command 'custom' (...3b0206) ['No objects found'] took 144ms

01:20:45:Object Detection (YOLOv8): Detecting using ipcam-general

01:20:45:Response rec'd from Object Detection (YOLOv8) command 'custom' (...2e1ffa) ['No objects found'] took 88ms

|

|

|

|

|

AgentDVR had an update a couple of days ago (5.5.2), and one of the release notes for 5.5.2 is "Add check for custom models to AI code to work around bug in CPAI". Perhaps that handles it?

|

|

|

|

|

|

Posted the wrong data earlier.

I added this error check to the YOLOv8 module's adapter.py and it seems to be working:

    elif data.command == "custom":  # Perform custom object detection
        # Check if there are any custom models available
        if not self.custom_model_names:
            # Load the custom models if they haven't been loaded yet
            self._list_custom_models()
            # After attempting to load, check again if there are any custom models available
            if not self.custom_model_names:
                # If still no custom models are found, return an error response
                return { "success": False, "error": "No custom models found" }

The original code checks whether there are custom models and, if there aren't, returns the error "No custom models found" right away. With this change, if no custom models are found, it tries to load the custom models and then checks again. This is a workaround for the fact that custom models are not being loaded initially, which causes the error.
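The same rescan-before-failing pattern can be tried in isolation with a small stand-alone sketch. This is not the module's real adapter class: `CustomModelRegistry`, `handle_custom` and the `*.pt` pattern are hypothetical stand-ins, and only `custom_model_names`, `_list_custom_models` and the error message mirror the snippet in this post.

```python
from pathlib import Path


class CustomModelRegistry:
    """Hypothetical stand-in for the adapter's custom-model bookkeeping."""

    def __init__(self, models_dir):
        self.models_dir = Path(models_dir)
        self.custom_model_names = []  # empty until the folder has been scanned

    def _list_custom_models(self):
        """(Re)scan the folder and remember model names without their extension."""
        if self.models_dir.is_dir():
            self.custom_model_names = sorted(
                path.stem for path in self.models_dir.glob("*.pt")
            )

    def handle_custom(self, model_name):
        """Answer a 'custom' request, rescanning once if no models are known yet."""
        if not self.custom_model_names:
            self._list_custom_models()
        if not self.custom_model_names:
            return {"success": False, "error": "No custom models found"}
        if model_name not in self.custom_model_names:
            return {"success": False, "error": f"Unknown model {model_name}"}
        return {"success": True, "model": model_name}
```

The rescan happens at most once per request, so a folder that really is empty still produces the original error.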

I hope you guys don't mind that I modified your code. I can remove it if that is a problem for you guys.

|

|

|

|

|

So, I am trying to get Blue Iris to alert only on certain vehicles. I live in a cul-de-sac, so people make u-turns all the time. What I would like, is for only certain vehicles to confirm the alerts. I figure, I will have to train a model on which vehicles I would like to confirm with, but also, if I could just do certain colors, that would send me in the right direction for now. Is there a way to confirm an alert if say a red car drives by?

I realize you will probably ask, well, don't you want the strangers' cars to alert you as well? Not so much. This is more for like automation confirmation and back-up than anything. But also, with this, I can set a rule to have certain alerts not trigger when my mom's silver car arrives. Like, if her car arrives, and then the front door cameras detect motion, "Hey! Mom's Here!" Something like that. And no, I am not a 15 year old kid trying to make sure mom doesn't catch me doing something wrong. I'm coming up on 40, and just like when my mom visits.

Are there tutorials out there on how to train a model, or anything about specific colors confirming alerts? Some things are just hard to search for, you know?

|

|

|

|

|

I am new to machine learning and stuff.

I want to do custom object detection in C# using a webcam at runtime.

At the client's request I first did this through Roboflow, but the client wants it fully offline because there is no internet access and for data safety reasons. Roboflow handled the object detection but did not provide an offline option, so I looked into YOLO. To try the new method I downloaded a small public balloon dataset from Roboflow in yolov5pytorch format. This dataset has blue and red balloons as classes.

Now I just need to train this and implement it in a C# WinForms project.

I opened the YOLOv5 Google Colab notebook to train on my dataset and followed each step. After training I tested it on a video in Google Colab and it was detecting red and blue balloons.

Now my question is how to implement this custom-trained model in my C# code for object detection.

Since I have a custom-trained YOLOv5 model, which tutorial should I follow to implement this?

I downloaded the YOLOv5 model in ONNX format and tried it with ML.NET, but did not understand it. I followed a tutorial where the author was working with a Custom Vision ONNX model and followed each step, but it failed. I do not understand in depth how it works.

So now I am stuck with a trained YOLOv5 model in Google Colab. What steps should I follow, or is there a tutorial link, to integrate this in my C# project?

Also, can I use this trained YOLOv5 model in Python and integrate the Python script in C#? Remember it has to detect at runtime and offline.

What I have tried:

Tried Roboflow (no offline feature).

Trained a custom dataset on Google Colab, downloaded the ONNX model of YOLOv5, and tried to implement it in a WinForms project with the help of ML.NET.

|

|

|

|

|
