|

Thanks very much for the report. When you test Object Detection (Coral) in the CodeProject.AI Server Explorer, does it work? Do you get any errors?

Thanks,

Sean Ewington

CodeProject

|

|

|

|

|

In the CodeProject.AI Server Explorer it works flawlessly (143 ms).

|

|

|

|

|

AgentDVR log.txt:

23:29:54 TmrBroadcastElapsed: Removed 1 rtc sessions

23:31:54 CAM1: SetFailed: Unable to perform detection at CoreLogic.AI.ObjectRecognizer.<detect>d__6.MoveNext()

23:31:54 CAM1: SetFailed: Will retry Object Recognition in 30 seconds.

23:31:54 CAM1: OpenWriter: StartSaving

23:31:54 CAM1: .ctor: Using stream timestamps for this recording

23:31:54 Open: OPEN RECORD

23:31:54 Open: written header

23:31:54 CAM1: Open: Recording (Raw Writer CoreLogic.Sources.Combined.RawMonitor)

23:31:54 CAM1: WarnClock: Dropped packet as out of order - set Use System Clock on recording tab if you have problems

23:31:55 CAM1: RecorderRecordingOpened: Recording Opened

23:32:33 CAM1: RecorderRecordingClosed: Recording Closed

23:32:33 CAM1: Close: Record stop

23:32:40 CAM1: SetFailed: Unable to perform detection at CoreLogic.AI.ObjectRecognizer.<detect>d__6.MoveNext()

23:32:40 CAM1: SetFailed: Will retry Object Recognition in 30 seconds.

23:32:41 CAM1: OpenWriter: StartSaving

23:32:41 CAM1: .ctor: Using stream timestamps for this recording

23:32:41 Open: OPEN RECORD

23:32:41 Open: written header

23:32:41 CAM1: Open: Recording (Raw Writer CoreLogic.Sources.Combined.RawMonitor)

23:32:41 CAM1: WarnClock: Dropped packet as out of order - set Use System Clock on recording tab if you have problems

23:32:41 CAM1: RecorderRecordingOpened: Recording Opened

23:32:46 CAM2: SetFailed: Unable to perform detection at CoreLogic.AI.ObjectRecognizer.<detect>d__6.MoveNext()

23:32:46 CAM2: SetFailed: Will retry Object Recognition in 30 seconds.

23:32:46 CAM2: OpenWriter: StartSaving

23:32:46 CAM2: .ctor: Using stream timestamps for this recording

23:32:46 Open: OPEN RECORD

23:32:46 Open: written header

23:32:46 CAM2: Open: Recording (Raw Writer CoreLogic.Sources.Combined.RawMonitor)

23:32:46 CAM2: RecorderRecordingOpened: Recording Opened

|

|

|

|

|

"Unable to perform detection" is an error message coming back from CPAI.

Check the CPAI logs after Agent tries to send an image in.
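To take Agent DVR out of the picture, one option is to post a saved camera frame straight to CPAI and look at the raw reply. The sketch below is a minimal, standard-library example and not anything Agent DVR itself does. It assumes the server listens on the default `localhost:32168`, that the detection route is `/v1/vision/detection` with the file sent in a form field named `image`, and that error replies are also JSON. The helper names and `frame.jpg` are hypothetical.

```python
import json
import urllib.error
import urllib.request
import uuid


def build_multipart(image_bytes, filename="frame.jpg"):
    """Encode one file as multipart/form-data under the field name 'image'."""
    boundary = uuid.uuid4().hex
    body = b"".join([
        f"--{boundary}\r\n".encode(),
        f'Content-Disposition: form-data; name="image"; filename="{filename}"\r\n'.encode(),
        b"Content-Type: application/octet-stream\r\n\r\n",
        image_bytes,
        f"\r\n--{boundary}--\r\n".encode(),
    ])
    return body, f"multipart/form-data; boundary={boundary}"


def detect(image_bytes, url="http://localhost:32168/v1/vision/detection"):
    """POST an image to the (assumed) detection route and return the parsed JSON."""
    body, content_type = build_multipart(image_bytes)
    request = urllib.request.Request(
        url, data=body, headers={"Content-Type": content_type}, method="POST"
    )
    try:
        with urllib.request.urlopen(request, timeout=10) as response:
            return json.loads(response.read())
    except urllib.error.HTTPError as err:
        # Assumption: a failed detection still carries a JSON body worth reading.
        return json.loads(err.read())


# Usage (needs a running server and a real image file):
# with open("frame.jpg", "rb") as f:
#     print(detect(f.read()))
```

Running this in a loop against the same frames Agent DVR sends would show whether the intermittent failure also appears outside Agent DVR.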

|

|

|

|

|

I see this in the Codeproject.AI Dashboard, if I switch the logging level to trace:

07:11:43:Object Detection (Coral): Retrieved objectdetection_queue command 'detect'

07:11:43:Response received (#reqid f65e9036-0dc6-4d7d-a8ac-042d2b2c02a2 for command detect): No objects found

07:11:43:Object Detection (Coral): Rec'd request for Object Detection (Coral) command 'detect' (...2c02a2) took 135ms

07:11:44:Client request 'detect' in queue 'objectdetection_queue' (...ba08ac)

07:11:44:Request 'detect' dequeued from 'objectdetection_queue' (...ba08ac)

07:11:44:Object Detection (Coral): Retrieved objectdetection_queue command 'detect'

07:11:44:Response received (#reqid 56d0fd97-59ab-4e36-baa5-2eead4ba08ac for command detect): No objects found

07:11:44:Object Detection (Coral): Rec'd request for Object Detection (Coral) command 'detect' (...ba08ac) took 138ms

Even though this looks fine, Agent DVR reports "AI down", so the problem still persists.

|

|

|

|

|

In Agent DVR, if you go to Server Settings - Logging and set logging to Debug, it'll show you the response from CPAI in the logs.

|

|

|

|

|

Let me know if you get this sorted out or if there's something on our end you need fixed.

cheers

Chris Maunder

|

|

|

|

|

07:02:19 Cam1: Process: Recognize Objects

07:02:19 Cam1: Detect: {"message":"No objects found","count":0,"predictions":[],"success":true,"processMs":133,"inferenceMs":128,"moduleId":"ObjectDetectionCoral","moduleName":"Object Detection (Coral)","code":200,"command":"detect","executionProvider":"TPU","canUseGPU":false,"statusData":{"successfulInferences":24025,"failedInferences":79,"numInferences":24104,"numItemsFound":514,"averageInferenceMs":128.10131113423517,"histogram":{"baseball bat":10,"bird":3,"person":115,"microwave":24,"umbrella":1,"boat":5,"train":10,"car":288,"bench":28,"sheep":2,"knife":3,"toilet":1,"airplane":2,"cat":1,"suitcase":6,"fire hydrant":1,"bus":1,"clock":1,"cell phone":7,"dog":2,"mouse":2,"traffic light":1}},"analysisRoundTripMs":139,"processedBy":"localhost"}

07:02:20 Cam2: Process: Recognize Objects

07:02:20 Cam1: Process: Recognize Objects

07:02:20 Cam1: Detect: {"success":false,"predictions":[],"message":"","error":"Unable to perform detection","count":0,"processMs":4,"inferenceMs":0,"moduleId":"ObjectDetectionCoral","moduleName":"Object Detection (Coral)","code":500,"command":"detect","executionProvider":"TPU","canUseGPU":false,"statusData":{"successfulInferences":24025,"failedInferences":80,"numInferences":24105,"numItemsFound":514,"averageInferenceMs":128.10131113423517,"histogram":{"baseball bat":10,"bird":3,"person":115,"microwave":24,"umbrella":1,"boat":5,"train":10,"car":288,"bench":28,"sheep":2,"knife":3,"toilet":1,"airplane":2,"cat":1,"suitcase":6,"fire hydrant":1,"bus":1,"clock":1,"cell phone":7,"dog":2,"mouse":2,"traffic light":1}},"analysisRoundTripMs":9,"processedBy":"localhost"}

07:02:20 Cam1: Failed: AI Failure count at 1

07:02:20 Cam1: SetFailed: Unable to perform detection at CoreLogic.AI.ObjectRecognizer.<Detect>d__6.MoveNext()

07:02:20 Cam1: SetFailed: Will retry Object Recognition in 30 seconds.

07:02:20 Cam2: Detect: {"message":"No objects found","count":0,"predictions":[],"success":true,"processMs":134,"inferenceMs":129,"moduleId":"ObjectDetectionCoral","moduleName":"Object Detection (Coral)","code":200,"command":"detect","executionProvider":"TPU","canUseGPU":false,"statusData":{"successfulInferences":24026,"failedInferences":80,"numInferences":24106,"numItemsFound":514,"averageInferenceMs":128.10134853908266,"histogram":{"baseball bat":10,"bird":3,"person":115,"microwave":24,"umbrella":1,"boat":5,"train":10,"car":288,"bench":28,"sheep":2,"knife":3,"toilet":1,"airplane":2,"cat":1,"suitcase":6,"fire hydrant":1,"bus":1,"clock":1,"cell phone":7,"dog":2,"mouse":2,"traffic light":1}},"analysisRoundTripMs":140,"processedBy":"localhost"}

07:02:20 StartSaving: From Alert: True, From AI Alert: False

07:02:20 Cam1: OpenWriter: StartSaving

07:02:20 Cam1: .ctor: Using stream timestamps for this recording

07:02:20 Open: OPEN RECORD

07:02:20 Open: written header

07:02:20 Cam1: Open: Recording (Raw Writer CoreLogic.Sources.Combined.RawMonitor)

07:02:20 Cam1: RecorderRecordingOpened: Recording Opened

07:02:21 Cam2: Process: Recognize Objects

07:02:21 Cam2: Detect: {"message":"No objects found","count":0,"predictions":[],"success":true,"processMs":131,"inferenceMs":126,"moduleId":"ObjectDetectionCoral","moduleName":"Object Detection (Coral)","code":200,"command":"detect","executionProvider":"TPU","canUseGPU":false,"statusData":{"successfulInferences":24027,"failedInferences":80,"numInferences":24107,"numItemsFound":514,"averageInferenceMs":128.10126108128355,"histogram":{"baseball bat":10,"bird":3,"person":115,"microwave":24,"umbrella":1,"boat":5,"train":10,"car":288,"bench":28,"sheep":2,"knife":3,"toilet":1,"airplane":2,"cat":1,"suitcase":6,"fire hydrant":1,"bus":1,"clock":1,"cell phone":7,"dog":2,"mouse":2,"traffic light":1}},"analysisRoundTripMs":136,"processedBy":"localhost"}

07:02:22 Cam2: Process: Recognize Objects

07:02:22 Cam2: Detect: {"message":"No objects found","count":0,"predictions":[],"success":true,"processMs":132,"inferenceMs":127,"moduleId":"ObjectDetectionCoral","moduleName":"Object Detection (Coral)","code":200,"command":"detect","executionProvider":"TPU","canUseGPU":false,"statusData":{"successfulInferences":24028,"failedInferences":80,"numInferences":24108,"numItemsFound":514,"averageInferenceMs":128.10121524887631,"histogram":{"baseball bat":10,"bird":3,"person":115,"microwave":24,"umbrella":1,"boat":5,"train":10,"car":288,"bench":28,"sheep":2,"knife":3,"toilet":1,"airplane":2,"cat":1,"suitcase":6,"fire hydrant":1,"bus":1,"clock":1,"cell phone":7,"dog":2,"mouse":2,"traffic light":1}},"analysisRoundTripMs":137,"processedBy":"localhost"}

Really interesting.

Sometimes it works, sometimes it doesn't.

|

|

|

|

|

Definitely an error on CPAI's side of things:

Detect: {"success":false,"predictions":[],"message":"","error":"Unable to perform detection","count":0,"processMs":4,"inferenceMs":0,"moduleId":"ObjectDetectionCoral","moduleName":"Object Detection (Coral)","code":500,"command":"detect","executionProvider":"TPU","canUseGPU":false,"statusData":{"successfulInferences":24025,"failedInferences":80,"numInferences":24105,"numItemsFound":514,"averageInferenceMs":128.10131113423517,"histogram":{"baseball bat":10,"bird":3,"person":115,"microwave":24,"umbrella":1,"boat":5,"train":10,"car":288,"bench":28,"sheep":2,"knife":3,"toilet":1,"airplane":2,"cat":1,"suitcase":6,"fire hydrant":1,"bus":1,"clock":1,"cell phone":7,"dog":2,"mouse":2,"traffic light":1}},"analysisRoundTripMs":9,"processedBy":"localhost"}
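For anyone comparing the good and bad replies in these logs, here is a small sketch that pulls out the fields that differ. The two sample strings are trimmed copies of the responses quoted in this thread; the function name is made up for illustration and is not part of CPAI or Agent DVR.

```python
import json


def summarize_detect(raw):
    """Reduce a detect response to the fields that distinguish success from failure."""
    resp = json.loads(raw)
    ok = resp.get("success") is True and resp.get("code") == 200
    return {
        "ok": ok,
        "code": resp.get("code"),
        # Successful replies carry 'message'; failed ones carry 'error'.
        "detail": resp.get("message") if ok else resp.get("error"),
        "inference_ms": resp.get("inferenceMs"),
    }


# Trimmed from the Agent DVR debug log above.
good = ('{"message":"No objects found","count":0,"predictions":[],"success":true,'
        '"processMs":133,"inferenceMs":128,"code":200}')
bad = ('{"success":false,"predictions":[],"message":"","error":"Unable to perform detection",'
       '"count":0,"processMs":4,"inferenceMs":0,"code":500}')

print(summarize_detect(good))
print(summarize_detect(bad))
```

In the failing reply `inferenceMs` is 0 and `processMs` is only 4, which suggests (but does not prove) that the request failed before any inference ran on the TPU.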

|

|

|

|

|

I'm hoping to have a new version of the Coral module out today that will provide better error information for this case.

cheers

Chris Maunder

|

|

|

|

|

So what can I do? The problem still persists. Thank you guys!

|

|

|

|

|

I assume that 2.5.1 is an actual production version since it is not marked as -alpha, -beta, -rc, etc.

Congratulations!

Installed the new version, and the install went smoothly.

Installed the Coral module, and that module is working well with Blue Iris and my M.2 Coral accelerator.

Thanks for all the hard work.

P.S. The Coral response times are faster by a factor of 2? i.e. where I was getting ~ 120 - 150 ms with ver 2.4.7, I am now getting 70 to 90 ms with 2.5.1.

|

|

|

|

|

Great, good to hear! Do you have the multi-TPU code enabled?

|

|

|

|

|

No.

Module 'Object Detection (Coral)' 2.1.1 (ID: ObjectDetectionCoral)

Valid: True

Module Path: <root>\modules\ObjectDetectionCoral

AutoStart: True

Queue: objectdetection_queue

Runtime: python3.9

Runtime Loc: Local

FilePath: objectdetection_coral_adapter.py

Pre installed: False

Start pause: 1 sec

LogVerbosity:

Platforms: all

GPU Libraries: installed if available

GPU Enabled: enabled

Parallelism: 1

Accelerator:

Half Precis.: enable

Environment Variables

CPAI_CORAL_MULTI_TPU = false

CPAI_CORAL_USE_YOLO = true

MODELS_DIR = <root>\modules\ObjectDetectionCoral\assets

MODEL_SIZE = medium

Status Data: {

"successfulInferences": 578,

"failedInferences": 225,

"numInferences": 803,

"numItemsFound": 673,

"averageInferenceMs": 76.33391003460207,

"histogram": {

"car": 545,

"person": 76,

"truck": 44,

"bus": 7,

"train": 1

}

}

Started: 26 Jan 2024 8:24:55 AM Central Standard Time

LastSeen: 26 Jan 2024 9:24:52 AM Central Standard Time

Status: Started

Requests: 1372 (includes status calls)

Provider: TPU

CanUseGPU: False

HardwareType: GPU
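As a side note on the Status Data above: the counters make it easy to work out how often inference fails. A minimal sketch, using the numbers posted in this thread (the function and variable names are just for illustration):

```python
def failure_rate(status):
    """Fraction of inferences that failed, from a module's statusData counters."""
    total = status["numInferences"]
    return status["failedInferences"] / total if total else 0.0


# Counters copied from the status dumps posted in this thread.
coral_2_5_1 = {"successfulInferences": 578, "failedInferences": 225, "numInferences": 803}
agent_dvr_log = {"successfulInferences": 24025, "failedInferences": 80, "numInferences": 24105}

print(f"{failure_rate(coral_2_5_1):.1%}")    # 28.0%
print(f"{failure_rate(agent_dvr_log):.1%}")  # 0.3%
```

So roughly one request in four is failing in this dump, compared with about one in three hundred in the Agent DVR logs earlier in the thread.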

|

|

|

|

|

Interesting, the non-multi-TPU code should be the same as before. I wonder if Chris will have any suggestions. If you enable the multi-TPU code you’re likely to see better concurrency and throughput, but I wouldn’t expect your timing numbers to improve. I wonder if the model that you are running is the same?

modified 26-Jan-24 11:04am.

|

|

|

|

|

I was going to blame you, Seth, for the speed kick but I actually suspect it's due to the use of the edgeTPU library rather than simply using the pycoral python code. Another possibility is he's using a different model than previous. There's a huge speed difference between MobileNet and EfficientDet.

cheers

Chris Maunder

|

|

|

|

|

Yeah. That’s the funny thing: I don’t think the new code will report numbers all that much better than the old code. It’s still doing the same things in the same order.

The new code will, however, handle a bajillion concurrent inferences. While one thread is running inference, another can be resizing. And another can be interpreting the results. And yet another can be running on a second TPU. And if someone is running with tiling, they might even be seeing slower speeds as it’s doing twice (or more) as much inference work in parallel.

Pillow-simd, on the other hand, will give better numbers. Maybe you’re right about the edgeTPU library? Maybe it’s compiled better than the default? Or maybe it’s just a different model.

|

|

|

|

|

Phase of the moon. I swear...

cheers

Chris Maunder

|

|

|

|

|

New version of CPAI is out. Does it work better for you?

|

|

|

|

|

Installed ver. 2.5.4 last night.

I was running YOLOv5 .NET before because I was getting thousands of failed inferences with the Coral version in 2.5.1. YOLOv5 .NET was not seeing the Intel 630 GPU, so I tried to uninstall and reinstall version 2.5.1. Now I am back to Error 1057 and can't get the CPAI service to run on the Windows 10 machine. I uninstalled and reinstalled several times, and both versions are still no good on the Windows machine. I had this before, but I don't remember what I did.

Luckily, I have 2.5.1 Docker running on an Ubuntu machine, so I pointed the Blue Iris instance to that CPAI server. I'll mess with it again when I have some time.

Actually, I noticed that the 2.5.4 installer has a checkbox to delete the existing detection modules. And of course I have a tendency to just check everything, so I blew away what was working and started over.

I will have to work on this to try to get it working again.

|

|

|

|

|

I have now uninstalled CPAI ver. 2.5.1, deleted the AI folders in ProgramFiles and ProgramData, and installed CPAI 2.5.4.

The service is started, and Coral is installed and set to Multi TPU with the Small model size. I am getting inferences of 7 ms, my garage is now a truck, etc.

Fast, but not very accurate.

Changed to the medium model size: same accuracy.

A question, maybe you know. Do you use Blue Iris with this? Or are you using it for something else?

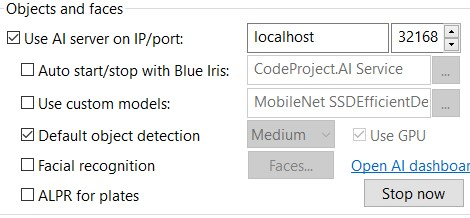

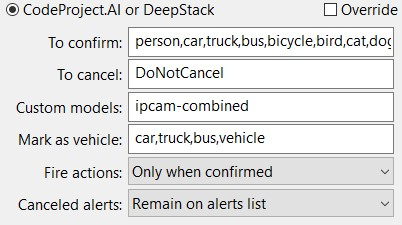

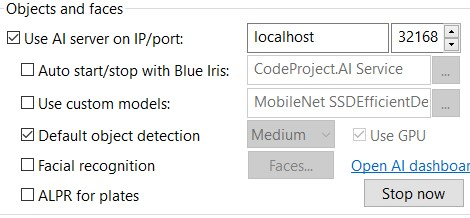

In Blue Iris, in general settings, you can set the program to use either Custom or default objects.

My understanding is that this determines how the program creates the HTTP request to CPAI, i.e. how it is formatted.

In the camera settings, there is a textbox where you enter the custom model name.

So, the model names that CPAI sends to Blue Iris are MobileNet SSD, EfficientDet, and Yolo5.

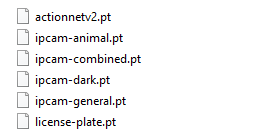

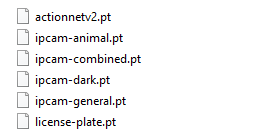

With the Yolo versions of the detection modules, the names of the custom models are the file names that are in the custom-models folder.

But under the Coral module folder, there is no custom-models folder.

But Code Project does send names of custom models to Blue Iris responding to the list-models call.

So is it possible to correctly enter a model name in the camera Custom models field when using the Coral detection module?

I have tried with various spellings, and CPAI returns nothing.

Yet in the explorer, you can use Custom models, select the model, and get results.

I have also compared the labels for MobileNet SSD, and they seem to be the same as the 80 COCO labels.

So it seems to me that there is no point in trying to use any custom model with the Coral detection module, and the only option is Default Object Detection.

So I am stuck with trains, toilets, elephants, TVs, cows, etc.

Sorry for the novel. I've just been wondering about the model list sent to Blue Iris.

|

|

|

|

|

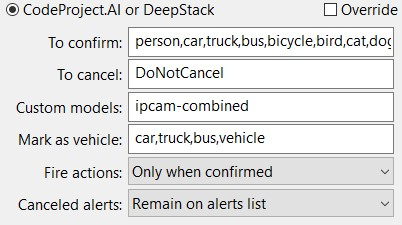

The Coral module does not have any of the ipcam custom models. Only the Object Detection (YOLOv5 .NET) and Object Detection (YOLOv5 6.2) modules have them.

|

|

|

|

|

Cancel that. I rebooted the machine, and the CodeProject.AI Server service will not start.

|

|

|

|

|

OK, I got it.

Turns out I broke it.

My Ubuntu machine hostname is "Ubuntu".

In the appsettings.json file, in order to try to get the mesh function to work, I entered "Ubuntu" in the Mesh Options / KnownMeshHostnames field.

I tried to run CodeProjectAI Server.exe.

I received: "U" is not an acceptable <something>.

So I removed the word Ubuntu from KnownMeshHostnames, saved the appsettings.json file and the service started.

I love software!!!
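One plausible reading of that error, and it is only a guess since the edited file isn't shown here, is that the hostname went in without quotes, so the JSON parser tripped on the first character. A quick way to catch that kind of slip before restarting the service is to run the text through a strict JSON parser. The fragments below are hypothetical; the real appsettings.json layout may differ.

```python
import json


def check_json(text):
    """Return None if text parses as strict JSON, else a short error description."""
    try:
        json.loads(text)
    except json.JSONDecodeError as err:
        return f"line {err.lineno}, column {err.colno}: {err.msg}"
    return None


# Hypothetical fragments for illustration only.
unquoted = '{"MeshOptions": {"KnownMeshHostnames": [Ubuntu]}}'
quoted = '{"MeshOptions": {"KnownMeshHostnames": ["Ubuntu"]}}'

print(check_json(unquoted))  # reports where parsing failed
print(check_json(quoted))    # None, the text is valid JSON
```

Note that Python's `json` module rejects comments, so a settings file that contains `//` comments would be flagged by this check even if the server accepts it.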

|

|

|

|

|

lol. Of course it was the U! /s

|

|

|

|

|
