This is a comprehensive guide to web scraping using various Python libraries, starting from the basics. It covers using the urllib.request library for basic scraping, scraping images, using the requests library for more advanced scraping, handling user agents, and parsing HTML with the BeautifulSoup library. An example of scraping a website and an explanation of scraping pagination are also given.

IMPORTANT: This article has been greatly improved, and a Selenium tutorial has been added to it, covering filling in entry boxes, clicking buttons and automating many other tasks. My English is not strong, so the grammar and punctuation may not be good. I have made the source of this entire article open source on GitHub, in the file source.html. If anyone is interested, please help with formatting it. Thank you! One of the comments says this is a basic article; that is no longer true.

Introduction

This article is meant for learning web scraping with the various libraries available for Python. If you are comfortable with Python, you can follow this article. It is a complete guide that starts from scratch.

Note: I stick with Python 3.x throughout, since it is the version that will be supported in the future.

Background

For those who have never heard of web scraping:

Consider this situation - a person wants to print two numbers in a console/terminal in Python. He/she will use something like this:

print("1 2")

So what should he/she do to print about 10 numbers? Well, he/she can use a loop. Now let's come to our situation: a website contains information about a person, and you want it in Excel. What do you do? You copy the person's details, such as contact information, into several rows. What do you do when there is information about 1000 people? You write a bot to do this work for you.

There are various libraries available for Python. I will try to explain all the important parts you need to become a fully fledged web scraper.

Using the Libraries

Using Default urllib.request Library

Python has its own module for fetching web pages, urllib.request. It is not the easiest option for advanced scraping, but it is useful for basic scraping. The third-party library requests is a better and more stable alternative, so I will cover requests in more depth than urllib.

OK, open up your favourite Python editor, import this library and type the following code [code 1]:

import urllib.request
source = urllib.request.urlopen("http://www.codeproject.com")
print(source)

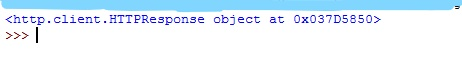

Here, urllib.request.urlopen gets the web-page. Now, when we execute this program, we will get something like this:

Let's have a closer look at this output. We got a response object, printed as <http.client.HTTPResponse object at 0x...>. http.client.HTTPResponse is a class, urlopen returned an instance (object) of it, and the hexadecimal number is the object's address in memory, not the page itself. To see what is actually in it, we unpack the object with the * operator. Python has no pointers: *source unpacks an iterable, and a response object yields the lines of the page body (as bytes). Modify the print statement above to:

print(*source)

Now when executing the code, you will see something like this:

You may ask: what is this? This is the HTML source code of the page you requested.

Seeing this, many people will think: great, I have the HTML code, now I can use regular expressions to get what I want :) But you should not. There is a dedicated parsing library for this, which I will explain later.

[code 2]: The same can be achieved without unpacking: just replace *source with source.read(), which returns the whole page body as a single bytes object.
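Putting code 1 and code 2 together, here is a minimal sketch of a reusable helper (fetch_html is a hypothetical name). It assumes the page is text and decodes the bytes from read() using the charset in the Content-Type header, falling back to UTF-8 when the header has none; that fallback is my own choice:

```python
import urllib.request


def fetch_html(url, timeout=10):
    """Download url and return its body decoded to a str."""
    # The response works as a context manager, so the connection is
    # closed automatically when the with-block ends
    with urllib.request.urlopen(url, timeout=timeout) as response:
        raw = response.read()  # the whole body as bytes, like source.read()
        # Charset from a header such as "text/html; charset=utf-8"
        charset = response.headers.get_content_charset() or "utf-8"
    return raw.decode(charset, errors="replace")
```

For example, print(fetch_html("https://www.codeproject.com")[:300]) prints the first 300 characters of the page source.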

Scraping the Images

OK, now how do we scrape images using Python? Let's take a website; we can use CodeProject itself (not for commercial use). Open CodeProject's home page, right-click the company logo and select View Image. You will see this:

Now copy the image's web link: https://codeproject.global.ssl.fastly.net/App_Themes/CodeProject/Img/logo250x135.gif

Note the last letters of the link, which give the image's file extension: gif in our case. Now we can save the image to disk.

Python's urllib.request module (defined in request.py) has a function named urlretrieve, which saves a file from the network to a local file. We are going to use it to save our image.

[code 3]

import urllib.request
# Download the logo and save it as our.gif
urllib.request.urlretrieve(
    "https://codeproject.global.ssl.fastly.net/App_Themes/CodeProject/Img/logo250x135.gif",
    "our.gif")

The above code saves the image in the current working directory, which is usually the folder you run the script from (not necessarily the folder where the Python file is located). The first argument is the URL and the second is the file name.
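A small variation on code 3 is sketched below. It derives the file name from the last part of the URL, so the .gif extension noted above is kept, and it streams the download with urlopen and shutil.copyfileobj instead of urlretrieve, which the Python documentation lists under its legacy interface. The function names and the download.bin fallback are hypothetical choices:

```python
import os
import posixpath
import shutil
import urllib.parse
import urllib.request


def filename_from_url(url, default="download.bin"):
    """Return the last path segment of url, e.g. 'logo250x135.gif'."""
    path = urllib.parse.urlsplit(url).path
    return posixpath.basename(path) or default


def save_url(url, dest_dir="."):
    """Download url into dest_dir and return the path of the saved file."""
    target = os.path.join(dest_dir, filename_from_url(url))
    with urllib.request.urlopen(url) as response, open(target, "wb") as out:
        # Copy the body in chunks instead of reading it all into memory
        shutil.copyfileobj(response, out)
    return target
```

With this, save_url("https://codeproject.global.ssl.fastly.net/App_Themes/CodeProject/Img/logo250x135.gif") would save logo250x135.gif in the current working directory.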

That's it for urllib. From here on we will use requests, which I have found to be more stable. Everything that can be done with urllib can also be done with requests.

Requests

Requests does not come with Python; it is a third-party package, so you need to install it. Just run the pip command below.

PIP COMMAND:

pip install requests

Or you can install it from source if you prefer. To check whether requests has been installed successfully, try importing it:

import requests

This import should run without any errors.

We will try this code:

[code 4]

import requests

request = requests.get("https://www.codeproject.com")

print(request)

Look at the line request = requests.get(...): request is a variable, and requests is the module, whose get function we call with our web link as the argument. This code will generate this output:

The output, <Response [200]>, is a response object whose HTTP status code, 200, means the request was successful.

[code 5]: Change the print statement to print(request.content). The output will be the content of the web page, which is nothing but its HTML source (as bytes; request.text gives the same content decoded to a string).
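Here is a slightly more defensive sketch of code 4 and code 5 wrapped in a function (get_html is a hypothetical name). It sets a timeout so the script cannot hang forever on a slow server, raises an exception for 4xx/5xx status codes instead of quietly returning an error page, and returns request.text rather than the raw bytes in request.content:

```python
import requests


def get_html(url, headers=None, timeout=10):
    """Fetch url with requests and return the decoded HTML."""
    # Without a timeout, requests can wait indefinitely for a response
    response = requests.get(url, headers=headers, timeout=timeout)
    # Raises requests.HTTPError for 4xx/5xx responses (e.g. 404, 500)
    response.raise_for_status()
    # .content is the raw bytes; .text decodes them with the encoding
    # requests picks, normally from the Content-Type header
    return response.text
```

Usage: html = get_html("https://www.codeproject.com").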

What is a user-agent?

When data travels over a network from source to destination, it is split into smaller chunks called packets, and each packet carries headers with information about the source and the destination. The user agent, however, lives one level higher: it is one of the HTTP request headers, name/value pairs that a browser or script sends with each request to describe itself. We will only look at the headers that are useful for web scraping.

Let me show you why this matters. First, we fire up our own server listening on the local machine, IP 127.0.0.1, port 1000. Instead of connecting to CodeProject, we connect to this server using http://127.0.0.1:1000, and you will see something like this on the server:

Let's have a closer look at the message. When we connect to the server using [code 6] (the same as code 4, with only the destination address changed), you will find the user agent listed as python-requests with its version and other details.
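The article does not show the server itself. If you want to reproduce this experiment, a minimal sketch using only the standard library's http.server could look like the following: it prints the request line and headers of every request it receives and sends the User-Agent back as the response body. The handler and function names are hypothetical. Note that on Linux and macOS, ports below 1024 (such as 1000) usually require administrator rights, so you may need a higher port such as 8000:

```python
import http.server
import threading


class EchoUserAgentHandler(http.server.BaseHTTPRequestHandler):
    """Print incoming request headers and echo back the User-Agent."""

    def do_GET(self):
        # Print exactly what the client sent
        print(self.requestline)
        print(self.headers, end="")
        body = self.headers.get("User-Agent", "").encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "text/plain; charset=utf-8")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def start_server(host="127.0.0.1", port=1000):
    """Start the server on a background thread and return it."""
    server = http.server.HTTPServer((host, port), EchoUserAgentHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server
```

For example, in an interactive Python session run srv = start_server(port=8000), then run code 6 against http://127.0.0.1:8000 from another terminal; the server prints a User-Agent: python-requests/... line. Call srv.shutdown() when you are done.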

The python-requests user agent reveals that the request comes from a program and not from a person using a browser, so some advanced websites will block you from scraping. What do we do now?

Changing the user-agent

This is our code 6.

import requests

request = requests.get("http://127.0.0.1:1000")

print(request.content)

We will add custom headers to our above code.

Open the Wikipedia article on user agents, https://en.wikipedia.org/wiki/User_agent, and you will find an example user agent there. For convenience, we will use it.

The example user agent from Wikipedia:

Mozilla/5.0 (iPad; U; CPU OS 3_2_1 like Mac OS X; en-us) AppleWebKit/531.21.10 (KHTML, like Gecko) Mobile/7B405

The user agent is added using a Python dictionary: the key is User-Agent and the value is any user-agent string. As an example, we will use the one above.

So our code will look something like this [code 7]:

# Adjacent string literals are joined, so the long value can span two lines
agent = {'User-Agent': 'Mozilla/5.0 (iPad; U; CPU OS 3_2_1 like Mac OS X; en-us) '
                       'AppleWebKit/531.21.10 (KHTML, like Gecko) Mobile/7B405'}

Now we pass this dictionary as the headers argument to change the user agent:

request = requests.get("http://127.0.0.1:1000", headers=agent)

On execution, you will see that the user agent received by the server changes from python-requests to the Mozilla/5.0 (iPad; ...) string we supplied. Don't believe me? See the screenshot below:
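Passing headers=agent on every call gets repetitive when you make many requests. A requests.Session can hold default headers that are sent with every request made through it; here is a minimal sketch (make_session is a hypothetical helper name):

```python
import requests

# The Wikipedia example user agent used in code 7
IPAD_AGENT = ("Mozilla/5.0 (iPad; U; CPU OS 3_2_1 like Mac OS X; en-us) "
              "AppleWebKit/531.21.10 (KHTML, like Gecko) Mobile/7B405")


def make_session(user_agent=IPAD_AGENT):
    """Return a Session that sends user_agent with every request."""
    session = requests.Session()
    # Session headers are merged into the headers of each request,
    # replacing the default python-requests/x.y.z value
    session.headers.update({"User-Agent": user_agent})
    return session
```

Usage: session = make_session() followed by request = session.get("http://127.0.0.1:1000"). A Session also reuses the underlying connection and keeps cookies between requests.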

What have we done so far? We have only fetched the page source using different methods. Now we will extract the data, so let's get started!

Library 3: BeautifulSoup: pip install beautifulsoup4

So what is Beautiful Soup? Is it a scraping library? Actually, Beautiful Soup is a parsing library, used to parse HTML.

HTML? Yes, HTML: remember that all the methods above fetch the page source, which is nothing but HTML.

TARGET SCRAPING WEBSITE: https://www.yellowpages.com.au/search/listings?clue=Restaurants&locationClue=&lat=&lon=&selectedViewMode=list

IMPORTANT: I am using this website only as an example, for educational purposes. I chose it because it has a clear layout with pagination, which makes it a good model for scraping other sites that you have permission to scrape. Be warned: scraping can lead to penalties if used wrongly. Don't involve me. :)

OK, let's get into the topic.

IMPORTANT

Basic HTML tags that are most often used in website scraping:

Tags: (Pocket hints)

<title> </title> => adds title to the webpage

<p> </p> => Paragraph

<a href="someLink"> </a> =>Links

<h(x)> </h(x)> => Heading tags

and other tags such as <div> (a container), and so on. This is not an HTML tutorial, anyway.
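To see how these tags map to Beautiful Soup calls, here is a small sketch that parses a hand-written page instead of a live site. The HTML, including the second restaurant name and all the links, is invented for illustration:

```python
from bs4 import BeautifulSoup

# Invented sample markup containing the tags from the pocket hints
HTML = """
<html>
  <head><title>Demo page</title></head>
  <body>
    <h1>Restaurants</h1>
    <p>Two places to try:</p>
    <a class="listing-name" href="/listing/1">Royal India Restaurant</a>
    <a class="listing-name" href="/listing/2">Sample Pizza Bar</a>
    <a href="/about">About us</a>
  </body>
</html>
"""

soup = BeautifulSoup(HTML, "html.parser")
title = soup.title.string               # text inside <title>
heading = soup.find("h1").get_text()    # first <h1> heading
paragraph = soup.find("p").get_text()   # first <p> paragraph
# Every <a> tag as a (link text, href attribute) pair
links = [(a.get_text(), a.get("href")) for a in soup.find_all("a")]
# Only the <a> tags whose class is listing-name
names = [a.get_text() for a in soup.find_all("a", class_="listing-name")]
```

The last line uses the same find_all("a", class_="listing-name") call that the restaurant scraper in this section relies on.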

[How to find out whether we are on the safe side of scraping, i.e. whether the site allows us to scrape it]:

The structure of the website is something like this:

We are going to scrape all the names shown in bold (e.g., Royal India Restaurant, seen in the picture).

STEPS

Right click the bold words in the website (Royal India Restaurant) and select inspect element. You will see something like this:

So we have found the right HTML tag. Have a look at it; you will find something like this:

<a class="class name" .... Here, a means a link, as explained in the pocket hints. So the bold names are links that belong to a class named "listing-name". Can you guess now how to get all the bold names?

Answer: scraping all the links that belong to this class will give us the names of all the restaurants.

Alright, we will write a script to scrape all the links first. To get the HTML source, I am going to use requests and to parse the HTML, I am going to use BeautifulSoup.

You might expect the following code to display the page content:

[code 9]

import requests
from bs4 import BeautifulSoup

if __name__ == "__main__":
    req = requests.get("https://www.yellowpages.com.au/search/listings?"
                       "clue=Restaurants&locationClue=&lat=&lon=&selectedViewMode=list")
    soup = BeautifulSoup(req.content, "html.parser")
    print(soup)

No, this will not display the page source. The output will be something like this:

Quote:

We value the quality of content provided to our customers, and to maintain this, we would like to ensure real humans are accessing our information.

...

<form action="/dataprotection" method="post" name="captcha">

Why did this happen?

This page appears when online data protection services detect requests coming from your computer network which appear to be in violation of our website's terms of use.

As I said, in real-world scraping, requests coming from Python often get blocked. This page tells us that we are violating the site's terms and conditions. The block can easily be bypassed by adding a user agent: with the user agent added to [code 9], the code works and we get the page source. But since we have now found that we are violating their terms and conditions, we should not scrape any further, so I stopped after showing the names scraped from page 1 of the website!
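Apart from noticing a block page like this one, a common first check before scraping is the site's robots.txt file, where site owners state which paths automated clients should not fetch (it does not replace reading the terms of use). Python's standard library can evaluate these rules; here is a minimal sketch that checks robots.txt text you have already downloaded. The function name and the sample rules are made up for illustration, not taken from any real site:

```python
from urllib.robotparser import RobotFileParser


def allowed_by_robots(robots_txt, url, user_agent="*"):
    """Return True if the robots.txt text permits user_agent to fetch url."""
    parser = RobotFileParser()
    # parse() takes the file's lines; read() would download the file
    # from the URL given to the constructor or set_url() instead
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Made-up rules for illustration only
SAMPLE_RULES = """
User-agent: *
Disallow: /search/
"""
```

With these sample rules, a URL under /search/ is reported as not allowed, while /about is allowed. To check a live site, RobotFileParser("https://example.com/robots.txt") followed by .read() downloads and parses the file for you.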

Below is the way around this protection, for educational purposes only.

So now our modified [code 9]:

import requests
from bs4 import BeautifulSoup

if __name__ == "__main__":
    # Pretend to be a browser (the Wikipedia example user agent)
    agent = {'User-Agent': 'Mozilla/5.0 (iPad; U; CPU OS 3_2_1 like Mac OS X; '
                           'en-us) AppleWebKit/531.21.10 (KHTML, like Gecko) Mobile/7B405'}
    req = requests.get("https://www.yellowpages.com.au/search/listings?"
                       "clue=Restaurants&locationClue=&lat=&lon=&selectedViewMode=list",
                       headers=agent)
    soup = BeautifulSoup(req.content, "html.parser")
    # Each restaurant name is the text of an <a class="listing-name"> link
    for i in soup.find_all("a", class_="listing-name"):
        print(i.text)

will yield this:

I stopped scraping here and have not scraped the website any further. I strongly recommend that you do the same, so that no one is affected.

One Complete Scraping Example to Get Familiar with Scraping

Target site: https://www.yelp.com/search?find_desc=Restaurants&find_loc=San+Francisco%2C+CA&ns=1

Before scraping this website, I would like to explain the general layouts that websites use. Once the layout is identified, we can write code to suit it.

1. All information on one long page

If this is our case, it is easy: we write a script that scrapes that single page.

2. Pagination

If a website has a pagination layout, the web site will have multiple pages like page1, page2, page3 and so on.

Our example website does have a pagination layout. Open the target site https://www.yelp.com/search?find_desc=Restaurants&find_loc=San+Francisco%2C+CA&ns=1

and scroll down; you will see something like this:

So that is pagination. In this case, we need to write a script that visits every single page and scrapes the information. I will explain scraping pagination in more detail below.

3. AJAX Spinner

Websites that load their content with AJAX (you typically see a loading spinner) need Selenium, which controls a real browser. I explain how to use Selenium later in this article.

Explanation for scraping pagination for the above link: https://www.yelp.com/search?find_desc=Restaurants&find_loc=San+Francisco%2C+CA&ns=1

For this case, a first idea is to scrape all the available page links (see the image above). You will find something like 1, 2, 3, ... Next >, and all of these are links (<a> tags) in HTML. But don't scrape for those links, because of what happens: you get the links for pages 1 to 9 but not for later pages, since the pagination bar only shows those numbers, followed by the Next > link.

Run the below code and see the output [code 10]:

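import requests
from bs4 import BeautifulSoup

def scrape(weblink):
    r = requests.get(weblink)
    soup = BeautifulSoup(r.content, "html.parser")
    # The numbered page links 1, 2, 3 ... in the pagination bar
    for i in soup.find_all("a", class_="available-number pagination-links_anchor"):
        print("https://www.yelp.com" + i.get("href"))
        print(i.text)

scrape("https://www.yelp.com/search?find_desc=Restaurants&find_loc=San+Francisco%2C+CA&ns=1")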

You will only get output for the first 9 pages. To get the links of all pages, we will scrape the Next links instead.

Go to the page and inspect the Next link:

You will find that the Next link has three classes, u-decoration-none, next and pagination-links_anchor, i.e. class="u-decoration-none next pagination-links_anchor".
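One detail matters when you pass a string containing spaces to class_: Beautiful Soup then matches the exact string of the class attribute, so the same classes in a different order do not match. Matching a single class, or using a CSS selector with select(), avoids that. A small sketch with invented markup:

```python
from bs4 import BeautifulSoup

# Invented markup: the same three classes as the Next link, in another order
HTML = '<a class="pagination-links_anchor next u-decoration-none" href="/page2">Next</a>'
soup = BeautifulSoup(HTML, "html.parser")

# Exact match on the whole class string: order matters, so nothing is found
exact = soup.find_all("a", class_="u-decoration-none next pagination-links_anchor")
# Matching just one of the classes works regardless of order
single = soup.find_all("a", class_="next")
# A CSS selector requires both classes, in any order
css = soup.select("a.next.pagination-links_anchor")
```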

Scraping the Next link gives you the link to the following page: when scraping page 1, it gives the link to page 2; when scraping page 2, it gives the link to page 3, and so on. Does that make sense? A function that scrapes a page can simply call itself on the Next link:

RECURSION...! :)

import requests
from bs4 import BeautifulSoup

def scrape(weblink):
    r = requests.get(weblink)
    soup = BeautifulSoup(r.content, "html.parser")
    for i in soup.find_all("a", class_="u-decoration-none next pagination-links_anchor"):
        next_page = "https://www.yelp.com" + i.get("href")
        print(next_page)
        # Recurse into the next page; stops when a page has no Next link
        scrape(next_page)

Now we can do whatever we want.

We will scrape the names of all restaurants as an example.

import requests
from bs4 import BeautifulSoup

def scrape(weblink):
    print(weblink)
    r = requests.get(weblink)
    soup = BeautifulSoup(r.content, "html.parser")
    # Restaurant names on the current page
    for i in soup.find_all("a", class_="biz-name js-analytics-click"):
        print(i.text)
    # Follow the Next link to the following page
    for i in soup.find_all("a", class_="u-decoration-none next pagination-links_anchor"):
        print("https://www.yelp.com" + i.get("href"))
        scrape("https://www.yelp.com" + i.get("href"))

This will give output something like this:

Quote:

https://www.yelp.com/search?find_desc=Restaurants&find_loc=San+Francisco%2C+CA&ns=1
Extreme Pizza
B Patisserie
Cuisine of Nepal
ABV
Southern Comfort Kitchen
Buzzworks
Frances
The Morris
Tacorea
No No Burger
August 1 Five
https://www.yelp.com/search?find_desc=Restaurants&find_loc=San+Francisco%2C+CA&start=10
https://www.yelp.com/search?find_desc=Restaurants&find_loc=San+Francisco%2C+CA&start=10
Extreme Pizza
Gary Danko
Italian Homemade Company
Nopa
Sugarfoot
Big Rec Taproom
El Farolito
Hogwash
Loló
Kebab King
Paprika
https://www.yelp.com/search?find_desc=Restaurants&find_loc=San+Francisco%2C+CA&start=20

You will find Extreme Pizza listed twice. It is a sponsored advertisement for the restaurant, which is displayed first on every page. A conditional statement that skips the first entry of each page will remove it; I won't explain this, since a beginner can do it.
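The recursive scrape() above works, but each page adds a level to the call stack (Python's default recursion limit is 1000), and nothing stops it if two pages ever link to each other. Below is a sketch of an iterative alternative with a set of visited URLs and a page limit. It takes the download function as a parameter so it can be tried on fake pages, and it uses CSS selectors; the function name, its parameters and the 50-page limit are my own assumptions, not part of the original code:

```python
from urllib.parse import urljoin

from bs4 import BeautifulSoup


def crawl_names(start_url, fetch, name_selector, next_selector, max_pages=50):
    """Collect names page by page by following the 'next' link.

    fetch(url) must return the HTML of url as a string, for example
    lambda u: requests.get(u, headers=agent, timeout=10).text
    """
    names, seen = [], set()
    url = start_url
    while url and url not in seen and len(seen) < max_pages:
        seen.add(url)
        soup = BeautifulSoup(fetch(url), "html.parser")
        names.extend(tag.get_text(strip=True) for tag in soup.select(name_selector))
        next_link = soup.select_one(next_selector)
        # urljoin turns a relative href such as "/search?...&start=10"
        # into an absolute URL, like "https://www.yelp.com" + href did
        if next_link is not None and next_link.get("href"):
            url = urljoin(url, next_link["href"])
        else:
            url = None
    return names
```

For the Yelp example, the selectors would be "a.biz-name" and "a.next.pagination-links_anchor", assuming the class names seen above are still in use. Adding a time.sleep() between pages is also kinder to the server.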

Using Burp Suite and Firefox Developer Edition to Assist with Complex Post Calls

Before getting started, download Burp Suite here: https://portswigger.net/burp/communitydownload. The Community Edition of Burp Suite is more than sufficient; you don't need the Professional version unless you are doing security testing.

- First, go to Burp and check that the proxy service is enabled.

- Open Firefox Developer Edition and set it to use Burp's proxy.

- Go to the Proxy tab and capture the GET and POST requests.

I know it is difficult to follow the three steps above, so I made a video so that it will be easier for you to understand.
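Once Burp has shown you what a POST request contains, you can reproduce it from Python with requests. The sketch below only builds and prepares the request, so you can compare it with what Burp captured before sending anything. The URL, form fields and build_post name are hypothetical placeholders, not taken from any real site:

```python
import requests


def build_post(url, form_fields, user_agent):
    """Prepare (but do not send) a form POST like the one seen in Burp."""
    request = requests.Request(
        "POST",
        url,
        data=form_fields,  # sent as application/x-www-form-urlencoded
        headers={"User-Agent": user_agent},
    )
    return request.prepare()


# Hypothetical values; copy the real ones from the request captured in Burp
prepared = build_post("https://example.com/login",
                      {"username": "alice", "page": "2"},
                      "Mozilla/5.0")
```

requests.Session().send(prepared, timeout=10) would then send it. To watch your script's own traffic in Burp, you can also pass proxies={"http": "http://127.0.0.1:8080", "https": "http://127.0.0.1:8080"} (Burp's default listener), together with Burp's CA certificate or verify=False for HTTPS sites.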

Ultimate Selenium Guide

So far, we have used several libraries for some really basic scraping. Now we are going to use web drivers for complete browser automation, which is really interesting to watch...

The easiest way to install Selenium is with pip install selenium; the package is also available for download from https://pypi.python.org/pypi/selenium

OK, after installing the Selenium, test the working of the selenium by importing the web driver:

from selenium import webdriver

If this line runs without errors, we can get started. As the import shows, we are going to use webdriver to automate browsers.

OK, there are various web drivers available out there which do the very same task but I am going to cover only two.

- chrome driver: for real world scraping

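- phantomjs: for headless scraping (PhantomJS is no longer maintained and Selenium 4 dropped support for it; headless Chrome is the usual replacement today)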

Chrome Driver

Let's see how to use the Chrome driver. Once you have checked that chromedriver is installed properly, do the following. First of all, it is advisable to keep chromedriver in one fixed location, e.g. C:\chromedriver.exe. If you place it next to your Python scripts it works fine, but then every separate project needs its own copy, which wastes disk space and becomes a nuisance to maintain.

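OK, now have a look at code 11; this code opens Google. (It uses the Selenium 4 API, where the driver path is passed through a Service object.)

[CODE 11]

from selenium import webdriver
from selenium.webdriver.chrome.service import Service

# Start Chrome using the chromedriver stored at E:\chromedriver.exe
browser = webdriver.Chrome(service=Service("E:\\chromedriver.exe"))
browser.get("https://www.google.com")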

The get method opens the web link passed as its argument. Now we will open and close the browser. To close the browser window, we use the close() method on our driver object:

browser.close()

So adding browser.close() at the end of the code closes the browser window.

However, close() only closes the current window; the WebDriver session and the chromedriver process can keep running. To end everything, use the browser.quit() method instead. [code 12]

Understanding ID, name and css_selectors

ID

The id global attribute defines a unique identifier (ID) which must be unique in the whole document. Its purpose is to identify the element when linking (using a fragment identifier), scripting, or styling (with CSS).

Reference: MDN

See the sample ID here:

OK, now we will try to click a button using its id!

See the sample CodeProject page, https://www.codeproject.com/Questions/ask.aspx.

Here, you will find the Cancel button. Right-click the Cancel button and inspect the element; you will find something like this:

Clicking the Cancel button redirects us to CodeProject's home page, so we are going to automate that with Selenium. Let's get started! [code - 13]

from selenium import webdriver
from selenium.webdriver.chrome.service import Service
from selenium.webdriver.common.by import By

if __name__ == "__main__":
    browser = webdriver.Chrome(service=Service("E:\\chromedriver.exe"))
    browser.get("https://www.codeproject.com/Questions/ask.aspx")
    # Locate the Cancel button by its id attribute
    cancel_button = browser.find_element(By.ID, "ctl00_ctl00_MC_AMC_PostEntry_Cancel")
    cancel_button.click()

Have a look at the line cancel_button = browser.find_element(By.ID, "ctl00_ctl00_MC_AMC_PostEntry_Cancel"): we find the element by its ID and click it. (Older Selenium versions wrote this as find_element_by_id(...), which current Selenium 4 releases no longer provide.) After that we do nothing, so once the button is clicked, the browser is redirected to the home page, since we clicked Cancel.

Understanding Name Tags

Now, open up Google and inspect the search box; you will find something like this:

IMPORTANT:

So far, we have opened a page, clicked a button and got the page source. Now we are going to fill in an entry box, so from here on we need a bit more attention, since we are moving from basics to advanced!

Import Keys, which provides special keys such as Enter:

from selenium.webdriver.common.keys import Keys

OK, let's see an example of how to automate a Google search [code - 14]:

from selenium import webdriver
from selenium.webdriver.chrome.service import Service
from selenium.webdriver.common.by import By
from selenium.webdriver.common.keys import Keys

browser = webdriver.Chrome(service=Service("E:\\chromedriver.exe"))
browser.get("https://www.google.com")
# The search box is the element with name="q"
name = browser.find_element(By.NAME, "q")
keyword = "Codeproject"
# Type the keyword, then press Enter to run the search
name.send_keys(keyword + Keys.ENTER)

So, send_keys(arg) takes the keyword as an argument and types it into the entry box.

Understanding css_selectors

Selectors define which elements a set of CSS rules applies to, and Selenium can use the same selectors to find elements. Read more here: https://developer.mozilla.org/en-US/docs/Web/CSS/CSS_Selectors

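[code 15]: This code searches DuckDuckGo using a CSS selector.

from selenium import webdriver
from selenium.webdriver.common.by import By
from selenium.webdriver.common.keys import Keys

if __name__ == "__main__":
    search_for = "apples"
    website = "https://duckduckgo.com/"
    # No driver path given: chromedriver must be on the PATH
    # (Selenium 4.6 and later can also locate one automatically)
    browser = webdriver.Chrome()
    browser.get(website)
    # "#..." selects the element whose id is search_form_input_homepage
    search = browser.find_element(By.CSS_SELECTOR, "#search_form_input_homepage")
    search.send_keys(search_for + Keys.ENTER)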

The easiest way to get an element's CSS selector is with Firefox Developer Edition: in the Inspector, right-click the element and choose Copy > CSS Selector.

I will cover more of Selenium in upcoming articles of this series.

Points of Interest

The final message I would like to convey to you is this:

I have not broken any rules on any of these sites, and I have not disobeyed any website's terms and conditions. I ask readers to use this knowledge wisely, for the good of humanity, and not to exploit websites, which I do not encourage.

More to come in future articles.

Kindly take a survey. I will cover it for you if this article is a successful one.
